The vSphere 5 Performance Best Practices guide covers a number of topics related to tuning host network performance. This post, aimed at the VCAP-DCA objective of the same name, briefly looks at some of them.

Network I/O Control

I've covered NIOC in a previous post, so I won't go into too much detail here. Network I/O Control (NIOC), a feature of the vSphere Distributed Switch, allows you to create network resource pools so that you can better manage how your host's physical network bandwidth is shared between traffic types. There are a number of pre-defined resource pools:

- Fault Tolerance Traffic

- iSCSI Traffic

- vMotion Traffic

- Management Traffic

- vSphere Replication Traffic

- NFS Traffic

- Virtual Machine Traffic

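You can also create user-defined resource pools. Bandwidth is allocated to resource pools using shares and limits: shares only come into play when a physical adapter is saturated, whereas a limit caps a pool's bandwidth at all times. Once you have defined your user-defined resource pools, you can assign them to port groups.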
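To illustrate how shares divide bandwidth during contention, here is a minimal sketch. It assumes shares are purely proportional among the traffic types active on a saturated uplink and ignores limits. The function name is hypothetical, and the share values in the example are vSphere's standard presets (High = 100, Normal = 50, Low = 25).

```shell
#!/bin/sh
# nioc_share_mbps UPLINK_MBPS POOL_SHARES SHARE [SHARE ...]
# Hypothetical helper: the bandwidth (in Mbps) a pool is entitled to on a
# saturated uplink, given its own shares and the shares of every pool that
# is currently active on that uplink (including the pool itself).
nioc_share_mbps() {
    uplink=$1
    pool=$2
    shift 2
    total=0
    # Sum the shares of all active pools.
    for s in "$@"; do
        total=$((total + s))
    done
    # Integer arithmetic: the result is rounded down to the nearest Mbps.
    echo $((uplink * pool / total))
}
```

For example, on a saturated 10 GbE uplink with VM traffic (100 shares), vMotion (50) and management (50) all active, `nioc_share_mbps 10000 100 100 50 50` gives VM traffic 5000 Mbps. If vMotion goes idle, `nioc_share_mbps 10000 100 100 50` raises that to 6666 Mbps, which is why shares are relative rather than fixed reservations.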

DirectPath I/O

I've also written about DirectPath I/O previously, so I'll keep this section brief. DirectPath I/O allows a guest operating system to access hardware devices directly. In networking terms, this means a virtual machine can be given direct access to a physical NIC, which reduces the CPU cost normally associated with emulated or para-virtualised network devices.

SplitRx Mode

SplitRx mode allows a host to use multiple physical CPUs to process network packets received in a single network queue. This can improve network performance for certain workloads, such as when multiple virtual machines on the same host are receiving multicast traffic from the same source.

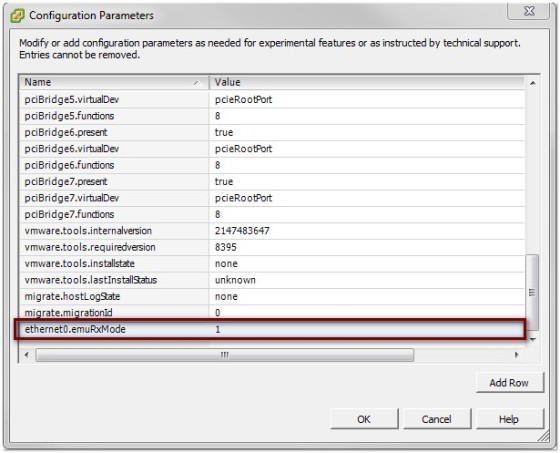

SplitRx mode can only be configured on VMXNET3 virtual network adapters and is disabled by default. It is configured per virtual NIC using the ethernetX.emuRxMode variable in the virtual machine's .vmx file, where X is the ID of the virtual network adapter. Setting ethernetX.emuRxMode = "0" disables SplitRx on an adapter, whilst setting ethernetX.emuRxMode = "1" enables it.

To change this setting using the vSphere Client, select the virtual machine, then click Edit Settings. On the Options tab, click Configuration Parameters (found under the General section). If the ethernetX.emuRxMode variable isn't listed, click Add Row and enter the variable name and value.

The change will not take effect until the virtual machine has been restarted.
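If you would rather edit the .vmx file directly from the ESXi shell, the sketch below sets or adds a single option. It is a hypothetical helper, not a VMware tool. It assumes the virtual machine is powered off while the file is edited. The host may also need to reload the configuration before it sees the change (for example with vim-cmd vmsvc/reload and the VM's ID), so the vSphere Client route above remains the simpler option.

```shell
#!/bin/sh
# set_vmx_option FILE KEY VALUE
# Hypothetical helper (not a VMware tool): set KEY = "VALUE" in a .vmx file.
# An existing KEY line is rewritten in place; otherwise the line is appended.
# Assumes the virtual machine is powered off while the file is edited.
set_vmx_option() {
    file=$1
    key=$2
    value=$3
    tmp="$file.tmp.$$"
    awk -v k="$key" -v v="$value" '
    {
        # Extract the option name: everything before the first "=", trimmed.
        name = $0
        sub(/[ \t]*=.*/, "", name)
        sub(/^[ \t]+/, "", name)
        if (name == k) {
            # Replace the existing setting with the new value.
            print k " = \"" v "\""
            found = 1
        } else {
            print
        }
    }
    END {
        # The option was not present, so add it as a new line.
        if (!found) print k " = \"" v "\""
    }' "$file" > "$tmp" && mv "$tmp" "$file"
}
```

For example, `set_vmx_option /vmfs/volumes/datastore1/myvm/myvm.vmx ethernet0.emuRxMode 1` (the path is a placeholder) would enable SplitRx on the first virtual NIC. Matching the option name as a literal string, rather than as a regular expression, avoids the dots in ethernetX.emuRxMode being treated as wildcards.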

Running Network Latency Sensitive Applications

There are a number of recommendations for running virtualised workloads that are highly sensitive to network latency:

- Use VMXNET3 virtual network adapters whenever possible

- Set the host's power policy to Maximum performance

- Enable Turbo Boost in the BIOS

- Disable VMXNET3 virtual interrupt coalescing for the desired NIC. To do this, set the ethernetX.coalescingScheme configuration parameter on the virtual machine to "disabled", where X is the number of the desired NIC. If the variable doesn't exist, you can add it.
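Before changing anything, it can help to see which virtual NICs are VMXNET3 and whether coalescing is already disabled on them. The sketch below reads a .vmx file and prints, for each ethernetN adapter, its virtualDev and coalescingScheme values. The function name is hypothetical, and it assumes options appear in the usual `key = "value"` form.

```shell
#!/bin/sh
# report_coalescing VMX_FILE
# Hypothetical helper: for each ethernetN adapter defined in the .vmx file,
# print "<nic> <virtualDev> <coalescingScheme>", using "(not set)" for any
# option that is absent.
report_coalescing() {
    awk '
    {
        line = $0
        # Only consider lines of the form ethernetN.<option> = "<value>".
        if (match(line, /^ethernet[0-9]+\./)) {
            nic = substr(line, 1, RLENGTH - 1)
            opt = substr(line, RLENGTH + 1)
            sub(/[ \t]*=.*/, "", opt)
            val = line
            sub(/^[^=]*=[ \t]*/, "", val)
            gsub(/"/, "", val)
            # Remember each NIC in the order it first appears.
            if (!(nic in seen)) { seen[nic] = 1; order[++n] = nic }
            if (opt == "virtualDev") dev[nic] = val
            if (opt == "coalescingScheme") cs[nic] = val
        }
    }
    END {
        for (i = 1; i <= n; i++) {
            nic = order[i]
            d = (nic in dev) ? dev[nic] : "(not set)"
            c = (nic in cs) ? cs[nic] : "(not set)"
            print nic, d, c
        }
    }' "$1"
}
```

A line such as `ethernet1 vmxnet3 (not set)` in the output would flag a VMXNET3 adapter that still has coalescing enabled.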

Jumbo Frames

The default Ethernet MTU (maximum transmission unit) is 1,500 bytes. A jumbo frame is a layer 2 Ethernet frame with a payload greater than 1,500 bytes. To enable jumbo frames, you need to increase the MTU on all devices that make up the network path from the source of the traffic to its destination. Using a larger MTU can lessen the CPU load on hosts, because fewer frames, and therefore less per-packet processing, are needed to move the same amount of data. An MTU of 9000 is commonly used when configuring jumbo frames, largely because it is widely supported by network equipment that supports jumbo frames.
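To put the frame-count saving into numbers, the sketch below works out how many frames are needed to carry a given amount of TCP data. It assumes 20-byte IPv4 and 20-byte TCP headers with no options, so each frame carries MTU minus 40 bytes of payload. The function name is hypothetical.

```shell
#!/bin/sh
# frames_needed BYTES MTU
# Hypothetical helper: how many Ethernet frames it takes to carry BYTES of
# TCP payload at a given MTU, assuming 20-byte IPv4 and 20-byte TCP headers
# with no options (so each frame carries MTU - 40 bytes of payload).
frames_needed() {
    bytes=$1
    mtu=$2
    mss=$((mtu - 40))
    # Ceiling division: a partially filled final frame still counts.
    echo $(( (bytes + mss - 1) / mss ))
}
```

Moving 1,000,000,000 bytes takes `frames_needed 1000000000 1500` = 684932 frames at the default MTU, but only `frames_needed 1000000000 9000` = 111608 frames with jumbo frames, roughly six times fewer. The real CPU saving may be smaller than this ratio suggests, since NIC offloads already reduce some per-packet work.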

As mentioned above, every device in the network path must be configured with the same (or a larger) MTU for jumbo frames to work effectively. With this in mind, common use cases are dedicated networks between ESXi hosts and iSCSI or NFS storage, and networks between ESXi hosts for vMotion traffic.

Using the vSphere Client, you can configure jumbo frames by going to the host's networking configuration page, editing the vSwitch properties, and changing the MTU size to 9000.

You will also need to change this setting on any other vSwitch on which you wish to enable jumbo frames. On a distributed vSwitch, you can change the MTU on the Advanced settings screen.

After configuring the vSwitch, the next step is to change the MTU of the VMkernel ports that you wish to use jumbo frames with. To do so, navigate to the virtual adapter, edit its settings and change the MTU to 9000.

This setting will need to be changed on every VMkernel port on which you wish to enable jumbo frames.

Configuring Jumbo Frames using the CLI

You can also change MTU sizes using the CLI. For example, to set the MTU on a standard vSwitch:

```shell
esxcfg-vswitch --mtu 9000 vSwitch0
```

To set the MTU on a VMkernel port:

```shell
esxcfg-vmknic --mtu 9000 "PortGroup Name"
```

You can also use esxcli to change the MTU. For example, to set it on vSwitch0 and then on the vmk0 VMkernel interface:

```shell
~ # esxcli network vswitch standard set -m 9000 -v vSwitch0

~ # esxcli network ip interface set -m 9000 -i vmk0
```
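Once everything is configured, a common way to confirm that jumbo frames work end to end is vmkping with the don't-fragment bit set (`-d`) and a payload size (`-s`) large enough to fill a full-size packet. The payload must be the MTU minus a 20-byte IPv4 header and an 8-byte ICMP header. The helper name and the target address below are placeholders.

```shell
#!/bin/sh
# vmkping_payload MTU
# Hypothetical helper: the largest ICMP payload that fits in one unfragmented
# IPv4 packet at the given MTU (MTU minus a 20-byte IPv4 header and an
# 8-byte ICMP header).
vmkping_payload() {
    echo $(( $1 - 28 ))
}

# Example (run from the ESXi shell; the target address is a placeholder):
#   vmkping -d -s "$(vmkping_payload 9000)" 192.0.2.10
```

With an MTU of 9000 this gives a payload of 8972 bytes. If that ping fails while one with a 1472-byte payload succeeds, some device in the path is probably not passing jumbo frames. You can also check the configured MTUs with `esxcli network ip interface list` and `esxcli network vswitch standard list`.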